TL;DR: AI Adoption Issues Sound Familiar to Agile Practitioners

If you have spent the last twenty years arguing that velocity is not value, that adoption is not impact, that an Agile transformation is not a Jira migration, the Stanford AI Index 2026 will read like déjà vu: The technology is new. The failure mode, the AI spending trap, is not. The organizations among the 88 percent that have adopted AI but cannot show an EBIT impact are the same organizations that adopted Scrum without learning empiricism, adopted DevOps without changing how they fund teams, and adopted product management without giving anyone product authority.

The economic data is the evidence. The interpretation is what you already know.

In the Case of the AI Spending Trap, History Rhymes Again

The 1850s gold rush produced a saying that has outlived its century: in a gold rush, sell shovels. Roughly 300,000 people poured into California in search of gold; unsurprisingly, most lost money. Sam Brannan, San Francisco’s first millionaire, ran the canonical shovel store (his bigger fortune came later from real estate). Wells Fargo was founded in 1852 to move the gold. The Big Four (Stanford, Huntington, Crocker, Hopkins) were Sacramento merchants who served miners before pivoting to build the transcontinental railroad with federal land grants. The actual 1850s pattern: mining-supply merchants became infrastructure barons. Most diggers became wage laborers for hydraulic mining companies by the mid-1850s.

Substitute compute for picks, frontier model labs for prospectors, and hyperscalers for shovel sellers. You have a reasonably accurate map of the AI market in April 2026.

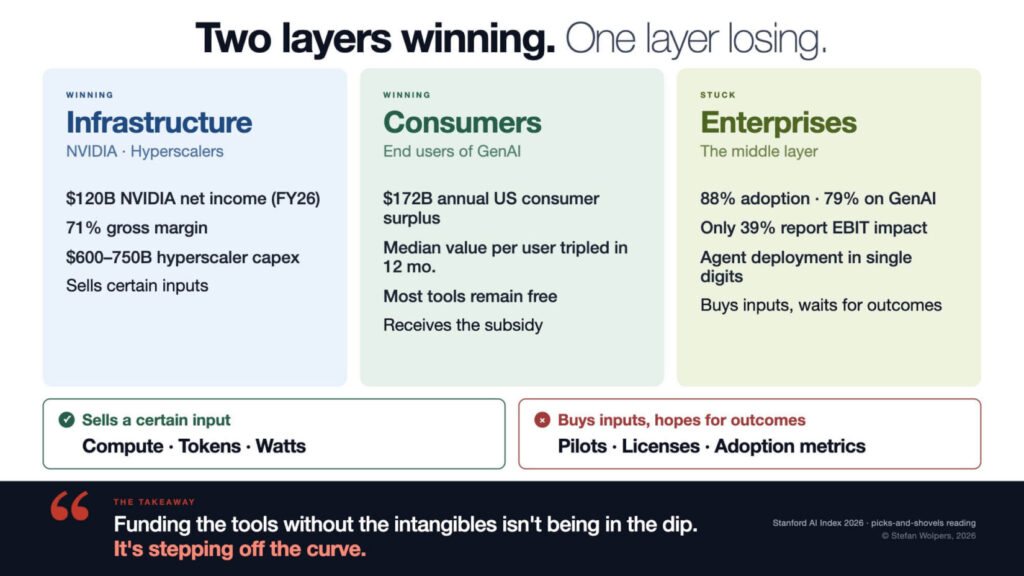

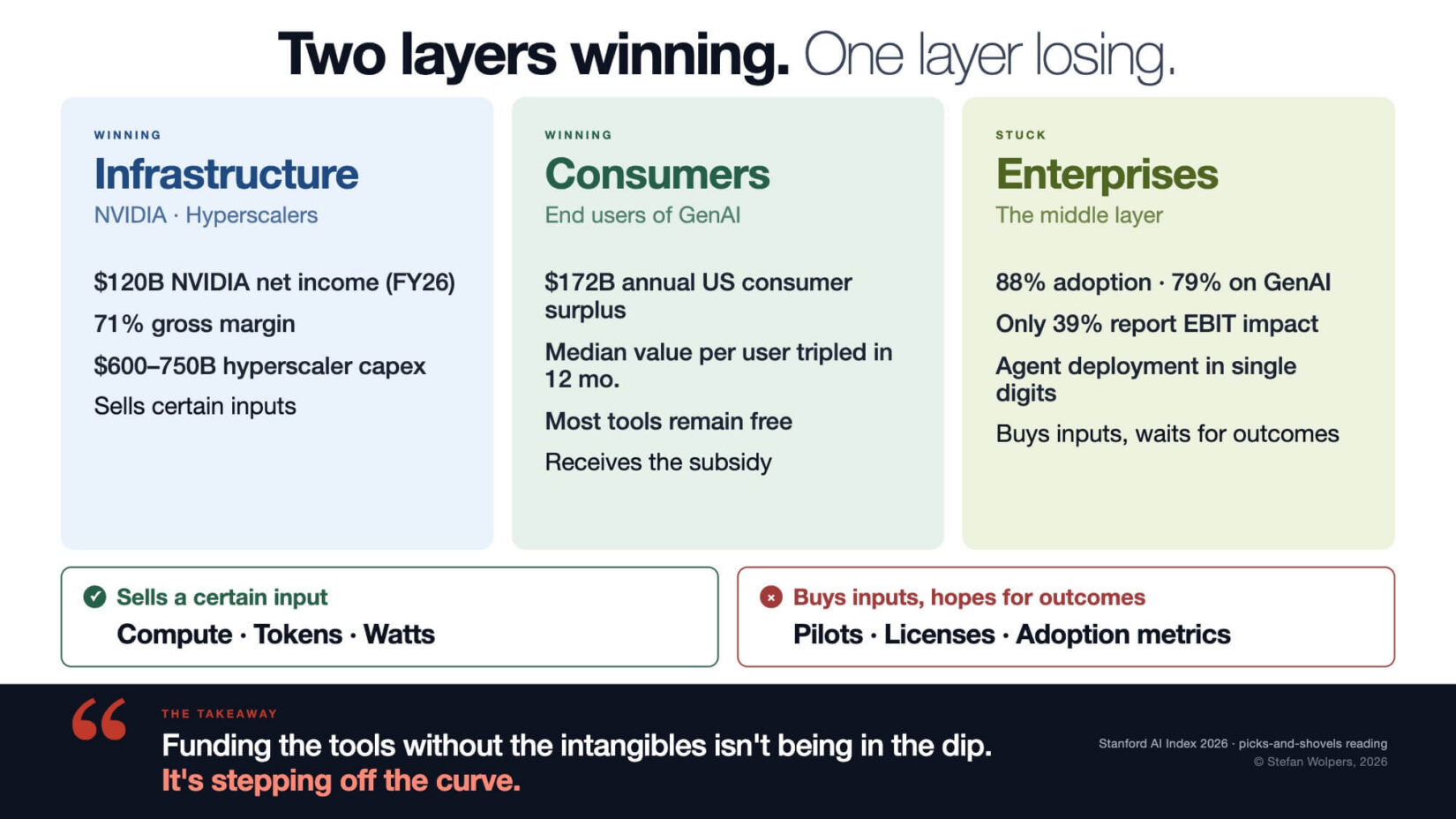

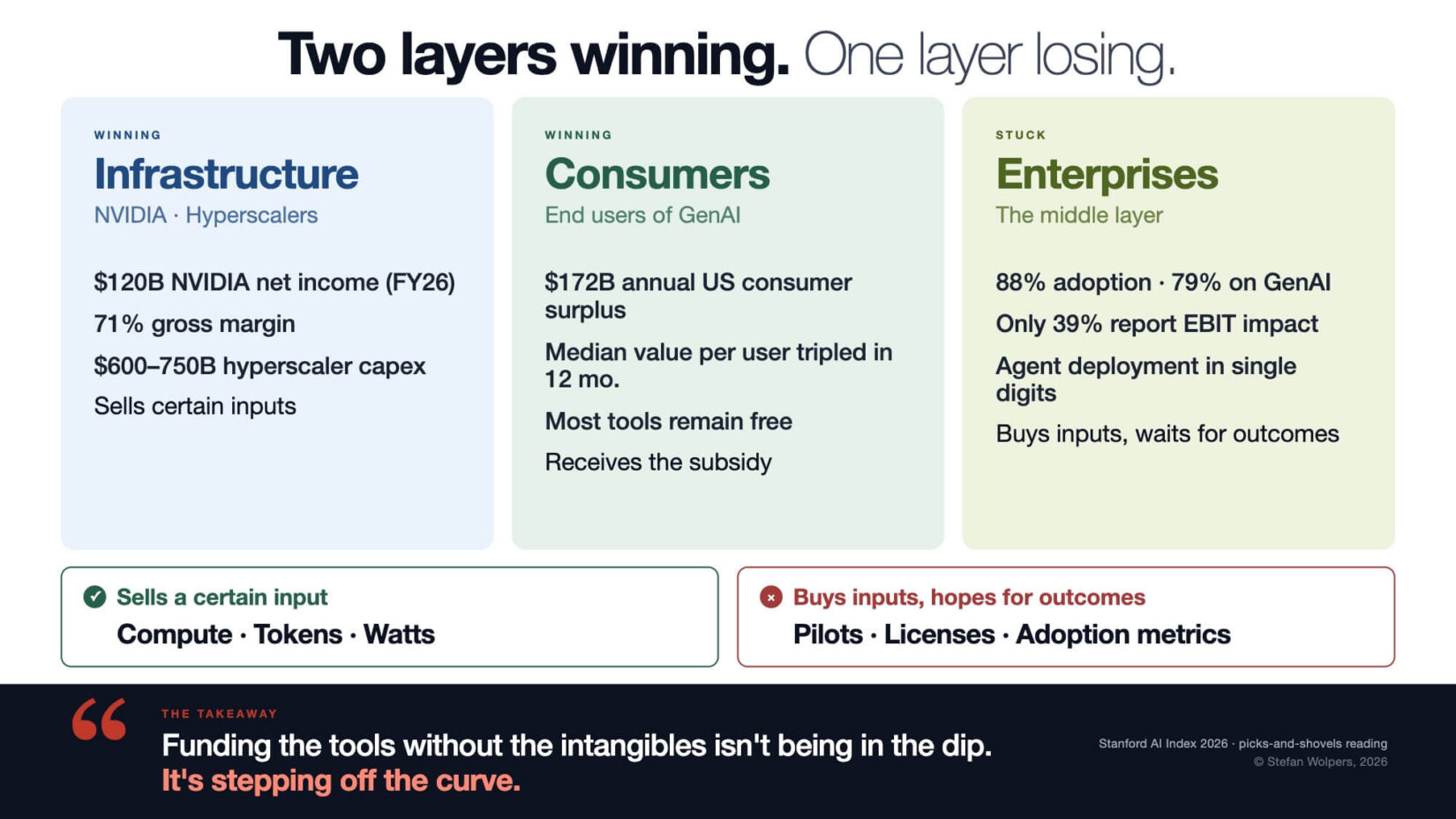

The Stanford AI Index Report 2026 landed two weeks ago. Read it carefully, and the picks-and-shovels pattern is sharper than the press coverage suggests. The infrastructure layer is winning. Consumers are winning, in dollar terms that most people have not measured. Enterprises are stuck.

Two layers winning, one layer losing. That is the story of the AI spending trap.

Infrastructure Providers and Consumers Are Winning. The Enterprises in the Middle Are Not.

Start with the infrastructure layer. NVIDIA earned $120 billion in net income in fiscal year 2026 (SEC filings, fiscal year ending late January 2026). That is more than the combined annual revenue of every frontier model lab on the planet. OpenAI, Anthropic, xAI, and the rest add up to roughly $55 billion in run rate. Hyperscaler capital expenditure on AI infrastructure is projected at $600 to $750 billion in 2026 across Microsoft, Google, Amazon, Meta, and Oracle. Google alone reported above $150 billion in capex for 2025. The Stanford report tracks $285.9 billion in US private AI investment in 2025, more than 23 times the $12.4 billion in China.

So far, this matches everyone’s mental model.

Now look at the second layer: the consumer. Brynjolfsson and colleagues (Stanford AI Index 2026, Chapter 4) ran online choice experiments on roughly 3,400 US adults across two waves in 2025 and early 2026. They asked one question: how much would you need to be paid to give up access to all generative AI tools for one month? Estimated US consumer surplus from generative AI hit $172 billion annually by early 2026, up from $112 billion a year earlier. The median value per user tripled in twelve months. Most of these tools remain free.

The consumer is capturing real value. The measure is willingness-to-accept compensation, not productivity, and most of it never shows up in revenue figures because the frontier model companies practically give the product away.

Now look at the third layer: the enterprise. Stanford 2026 reports that 88 percent of organizations now use AI in at least one business function, up from 78 percent in 2024 (drawing on McKinsey’s State of AI 2025 survey of 1,993 respondents). Seventy-nine percent specifically use generative AI, up from 71 percent. Adoption is nearly universal. So is the absorption ceiling. Productivity gains range from 14 to 26 percent in customer support and software development, with weaker or negative effects in tasks that require more judgment. AI agent deployment, the thing every vendor pitched as the unlock in 2025, sits in the single digits across nearly every business function. The McKinsey reading on earnings: 39 percent of organizations report an EBIT impact from generative AI, and most of those report it at under 5 percent.

And then there is the study that should have put an end to the perception debate. METR (the Model Evaluation and Threat Research lab) ran a randomized controlled trial in early 2025 on 16 experienced open-source developers contributing to large, mature codebases (with an average of 22,000 GitHub stars and more than 1 million lines of code). The trial covered 246 real issues, used randomized AI-allowed versus AI-disallowed assignment, and paid participants $150 per hour. The result: developers were 19 percent slower with AI tooling than without it.

The same developers expected a 24 percent speedup beforehand. After the study, they still believed they had been about 20 percent faster. The perception gap is a durable insight. Engineers feel faster with AI even when measurements show they are slower. So do their managers.
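The size of that perception gap is easier to see as arithmetic. The sketch below uses only the three METR figures quoted above and assumes, as a simplification, that each percentage describes a change in task completion time relative to working without AI. The function and variable names are illustrative, not taken from the study.

```python
# Perception gap from the METR figures quoted above.
# Assumption: each percentage is read as a change in completion time
# relative to the no-AI baseline (baseline = 1.0).

BASELINE = 1.0


def relative_time(change_pct: float) -> float:
    """Completion time relative to baseline; a negative change means faster."""
    return BASELINE * (1 + change_pct / 100)


expected = relative_time(-24)  # forecast before the study: 24 percent faster
measured = relative_time(+19)  # measured result: 19 percent slower
believed = relative_time(-20)  # belief after the study: about 20 percent faster

# Gap between belief and measurement, in percentage points of baseline time.
perception_gap_points = round((measured - believed) * 100)

print(f"expected {expected:.2f}x, measured {measured:.2f}x, believed {believed:.2f}x")
print(f"perception gap: {perception_gap_points} points of baseline time")
```

Under that reading, the distance between what developers believed and what was measured is larger than either number on its own.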

Three layers, three different value-capture profiles. The infrastructure providers and the consumers are doing fine. The companies in the middle, which spent the money buying the picks, are not. If corporate patience were abundant, this temporary situation would not be a problem. However, the financial industry has other plans, and this is where the AI spending trap begins.

The agile practitioner reading this is having a specific experience. The 88 percent adoption / 39 percent EBIT-impact gap is the same gap the State of Agile reports have shown year after year: most organizations claim Agile transformation, few report measurable business outcomes from it. Standish Group CHAOS data, going back two decades, has documented a similarly stubborn pattern: software project success rates have hovered around 29 percent across reporting periods, with agile approaches outperforming waterfall by roughly three to one but still leaving most projects in the challenged or failed bucket. Same shape, different decade. The technology is installed. The work does not get redesigned. The outcomes do not arrive. AI is the third or fourth time we have run this experiment.

The Recursive Margin Loop

NVIDIA’s 71 percent gross margin and OpenAI’s 33 to 40 percent are not the result of competitive vigor on one side and weakness on the other. They are the result of each company’s position in the stack.

Trace the customer dollar. The customer pays OpenAI for an API call. OpenAI pays Microsoft for Azure compute to serve the call. Microsoft pays NVIDIA for the GPUs running the workload while keeping its own margin on top. NVIDIA pays TSMC for the silicon. Each layer up the stack captures less of the dollar than the layer below. The model layer occupies the retailer position, and retailers run thin margins on volume.

The picture sharpens when you add the temporal dimension. Frontier model labs are not yet cash-flow businesses. They are running large, capital-intensive customer-acquisition phases funded by venture capital and hyperscaler equity-for-credits deals (Microsoft’s $13 billion into OpenAI, Amazon’s $8 billion into Anthropic, the Stargate joint venture). Those dollars do not stay at the lab. They convert into compute commitments and are recycled to NVIDIA and TSMC within the same fiscal year. The lab is a conduit, not the beneficiary. The wealth transfer is from venture capital to chipmakers, with frontier labs as the regulated utility in between, subsidizing the world’s intelligence experiments at a loss until either pricing power migrates up the stack or the funding stops.

Two caveats stop this from becoming a permanent verdict. Anthropic went from $1 billion to $30 billion in run-rate revenue between January 2025 and April 2026, suggesting that frontier labs can scale faster as their cost stack tightens. Per-token prices have collapsed by roughly 90 percent over the past 18 months, suggesting pricing power is migrating up the stack. Both signals are real.

For now, in April/May 2026, the configuration favors the layer selling a certain input (silicon and watts) over the layer selling an uncertain output (intelligence). That is the picks-and-shovels point with the mechanism made explicit.

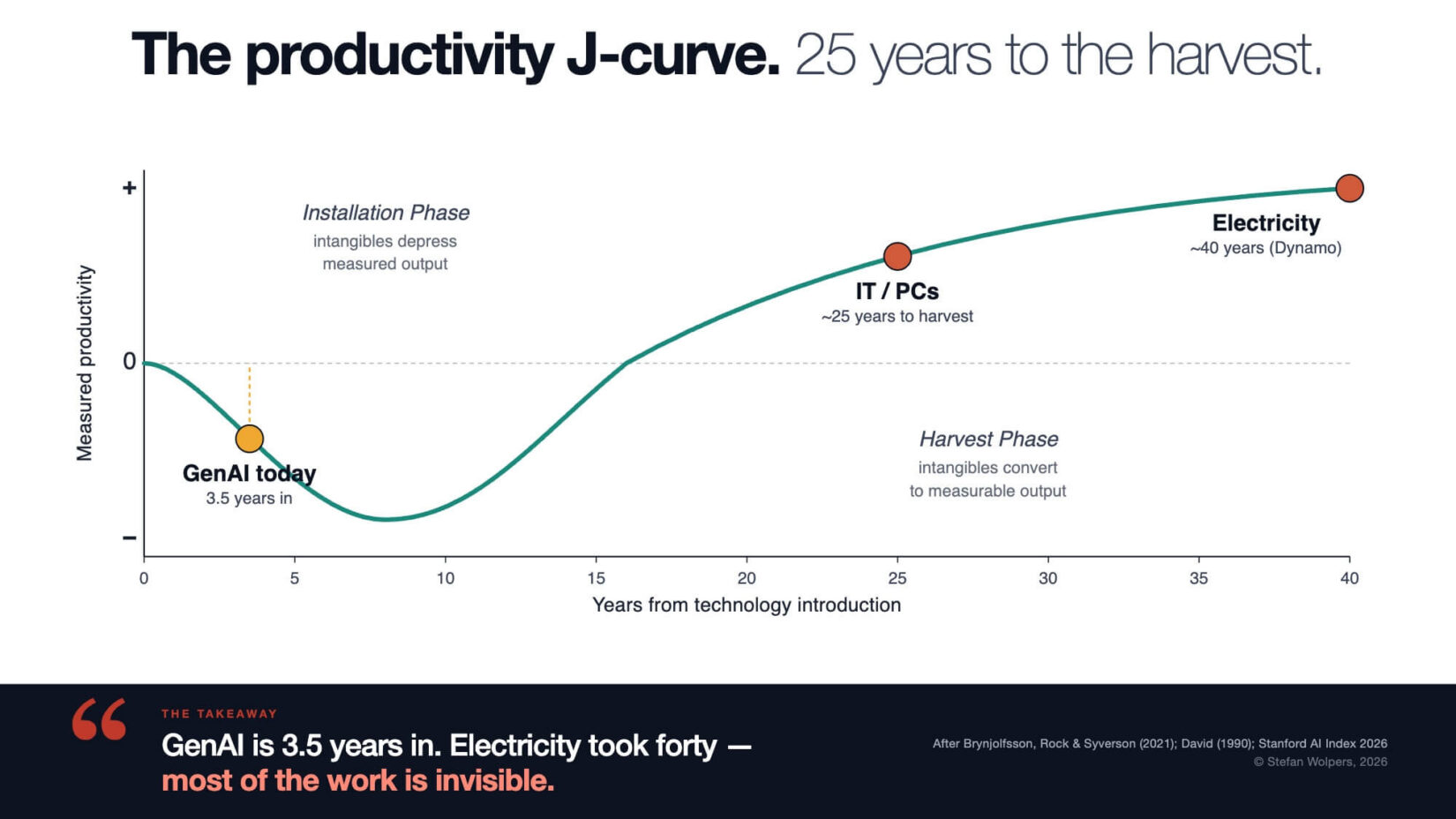

The Shape of GPT Adoption Is a J-Curve

Erik Brynjolfsson, Daniel Rock, and Chad Syverson published The Productivity J-Curve: How Intangibles Complement General Purpose Technologies in 2021 (American Economic Journal: Macroeconomics, vol. 13 no. 1). The thesis: General Purpose Technologies (GPTs) like electricity, the steam engine, and the personal computer do not just plug in and produce output. They require a massive investment in intangible capital: process redesign, retraining, organizational restructuring, and new business models. That investment is expensed, not capitalized. So measured productivity first dips while resources flow into intangibles whose output is not yet visible, then rises as those intangibles convert to measurable output.
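The dip-then-rise mechanism can be illustrated with a toy calculation. This is not the model from the paper; it is a minimal sketch in which every parameter (the share of effort diverted to intangibles, the number of investment periods, the payoff rate) is a hypothetical value chosen only to make the shape visible.

```python
# Toy illustration of a productivity J-curve. NOT the Brynjolfsson, Rock,
# and Syverson model: all parameters are hypothetical.

def j_curve(periods=12, invest_share=0.15, invest_periods=6, payoff_rate=0.05):
    """Return measured productivity per period, with 1.0 as the baseline.

    While the firm invests, a share of its effort goes into intangibles
    that are expensed and produce no measured output yet. The accumulated
    intangible stock pays off as extra measured output in later periods.
    """
    stock = 0.0
    path = []
    for t in range(periods):
        share = invest_share if t < invest_periods else 0.0
        measured = (1.0 - share) + payoff_rate * stock
        path.append(measured)
        stock += share  # this period's investment becomes usable next period
    return path


path = j_curve()
for period, value in enumerate(path):
    print(f"period {period:2d}: measured productivity {value:.3f}")
```

With these assumed values, measured productivity starts below the baseline during the investment periods and ends above it once the intangibles start paying off.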

(I know what “general purpose technology” sounds like in 2026. It sounds like a tech vendor’s marketing brochure. The term is older. It has been used in economic history for thirty years to describe steam, electricity, and IT.)

The shape, and where the major GPTs of the past 150 years sit along it:

The historical case study sits in Paul David’s 1990 paper The Dynamo and the Computer (American Economic Review Papers and Proceedings). Electric motors were widespread in factories from the 1890s. Productivity gains arrived in the 1920s. The lag was about forty years, and it was caused by something specific: factories kept their steam-shaft layouts after installing electric motors. Productivity broke loose only when factories were redesigned around the assumption of cheap distributed power.

Brynjolfsson coined the phrase productivity paradox for IT in 1993. The IT productivity boom arrived in 1995, 25 years after the invention.

The agile coach reading this has worked the same curve on a smaller scale. Every honest agile transformation runs through a measurable dip. Teams learning Scrum are slower for two quarters because they are unlearning command-and-control reflexes and relearning how to plan empirically. Teams adopting Continuous Delivery break things as they build the deployment pipeline. The CFO sees a productivity drop, panics, pulls the funding, and the transformation dies in the dip. The teams that survive are the ones whose leadership understood the curve before they started. The teams that die are the ones whose leadership funded the tools and skipped the intangibles. The Stanford 2026 enterprise data is the same pattern at a different scale.

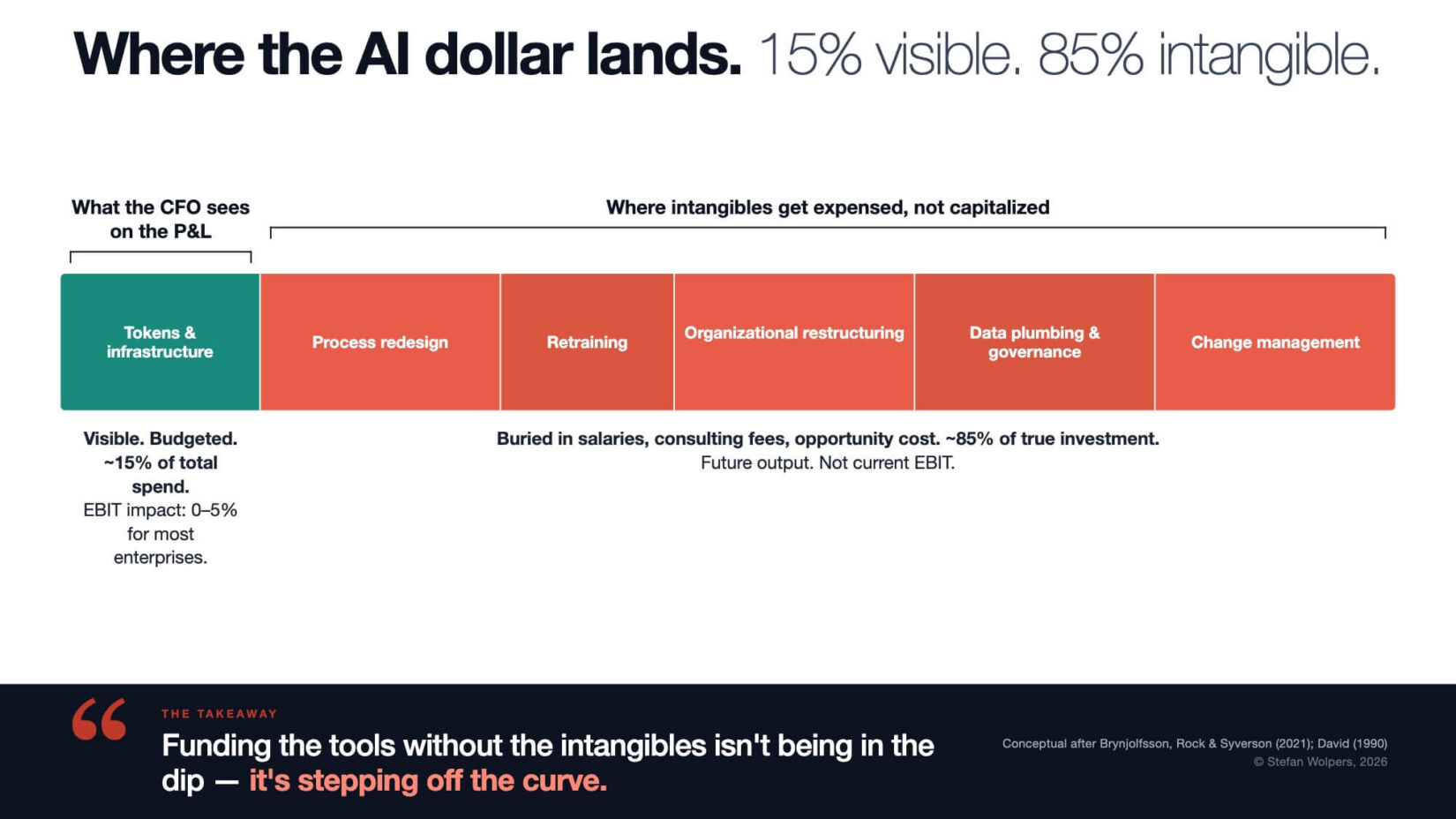

What sits inside the dip is not nothing. It is intangible-capital investment that the accounting system cannot see: process redesign (rebuilding workflows around the assumption of cheap inference), retraining (teaching practitioners to use the tool well rather than fluently), organizational restructuring (collapsing review chains, reassigning judgment work), data plumbing and governance (the unglamorous infrastructure that determines whether the tool produces signal or noise), and change management (the work of getting humans to adopt the new flow rather than work around it). Five categories, all expensed rather than capitalized, all paid for in the dip.

ChatGPT launched on November 30, 2022. We are three and a half years into the GenAI cycle. By every historical benchmark, we have barely begun.

The dip is the geometry of GPT adoption, not a sign of failure.

A company that spent $40 million on AI in 2024 and shows zero EBIT impact is not necessarily failing. The deployment might be in a normally shaped intangibles-investment phase, exactly where Paul David’s factories were in 1900. The risk is not being in the dip. The risk is being in the dip without the intangible-capital investment that produces the eventual rise. Companies that cut headcount from operations, fire institutional knowledge, and call that an AI strategy are not on the curve. They have stepped off it.

There is honest dissent on the size of the eventual prize. MIT’s Daron Acemoglu published a counter-thesis in Economic Policy in 2025, estimating that AI will add no more than 0.66 percent to total factor productivity (TFP, the economic measure of how much extra output an economy gets from the same amount of labor and capital, usually attributed to better technology and organization) over ten years, roughly one-twentieth to one-fiftieth of Goldman Sachs’ and McKinsey’s forecasts. Acemoglu calls AI a so-so technology: it brings sizable labor displacement but only modest productivity gains. Brynjolfsson disagrees by an order of magnitude. They both agree on the mechanism: the prize requires intangibles that have to be paid for. There is no off-the-shelf path.

That dissent is not an academic curiosity. It is a falsifiable test you can apply to your own pilots tomorrow. Run a Red Team exercise: assume Acemoglu is right. AI is a so-so technology. The eventual TFP gain over a decade is 0.66 percent, not 15 percent. Which of your current pilots survive that assumption? The pilots with a defensible theory of value (a specific process to redesign, a measurable outcome, a falsifiable hypothesis about cost or revenue) survive. The pilots dressed in J-curve clothing without that theory do not. They are output theater betting on an assumption you cannot afford to be wrong about.
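The Red Team screen can be written down as a simple checklist. The sketch below is one possible encoding, assuming a pilot "survives" only when all three parts of a theory of value named above are stated; the `Pilot` class, its field names, and the two sample pilots are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Pilot:
    """Hypothetical record of an AI pilot and its stated theory of value."""
    name: str
    process_to_redesign: str = ""     # the specific process the pilot changes
    measurable_outcome: str = ""      # the metric that is supposed to move
    falsifiable_hypothesis: str = ""  # a cost or revenue claim that can be proven wrong


def survives_red_team(pilot: Pilot) -> bool:
    """A pilot survives only if all three parts of its theory of value are stated."""
    parts = (
        pilot.process_to_redesign,
        pilot.measurable_outcome,
        pilot.falsifiable_hypothesis,
    )
    return all(part.strip() for part in parts)


# Hypothetical pilots for illustration only.
pilots = [
    Pilot(
        name="Support triage",
        process_to_redesign="ticket routing",
        measurable_outcome="median time to first response",
        falsifiable_hypothesis="routing cost per ticket falls within two quarters",
    ),
    Pilot(name="AI assistant rollout"),  # no stated theory of value
]

survivors = [pilot.name for pilot in pilots if survives_red_team(pilot)]
print(survivors)
```

A check this blunt does not judge whether the hypothesis is any good. It only separates pilots that have one from pilots that do not, which is the first cut the exercise asks for.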

Seven Failure Modes Practitioners Should Name

The Stanford 2026 data shows where productivity gains exist (in narrow, well-measured tasks like customer support and software development) and where they do not (in anything that requires sustained judgment). Most enterprise AI deployments fall into the second group. The failure modes below describe how that happens, mode by mode. The agile practitioner will recognize the pattern under each one: the technology is new, but the anti-pattern is not.

1. Output theater: Counting AI features shipped instead of measuring outcome impact. A team that ships an AI feature without a baseline metric, a target, and a measurement window has not done AI. It has done AI theater. The 88 percent organizational adoption rate in Stanford 2026 is mostly this. Adoption is not impact. (The Scrum-era version of this anti-pattern was velocity worship: counting story points completed and calling it productivity. Same mechanism, faster tooling.)

2. Misallocation by visibility: Sales and marketing get the budget because they are visible to the C-suite. Back-office automation delivers better ROI but does not appear in the company’s off-site slide deck. The misallocation is rational from a career-incentive perspective. It is catastrophic from a value-creation perspective. (HiPPO-driven backlog with a new acronym.)

3. The Klarna trap: Klarna replaced about 700 customer service agents with an OpenAI-built chatbot in 2023, claimed $40 million in annual savings, then reversed in May 2025. CEO Sebastian Siemiatkowski announced the reversal, acknowledging that the company had focused too heavily on efficiency and cost, and that the resulting drop in service quality was not sustainable. Wholesale human replacement under-prices the verification, governance, and quality-recovery costs. The arc has repeated at McDonald’s (IBM drive-through canceled July 2024), Air Canada (chatbot liability ruling Moffatt v. Air Canada, February 2024), and Builder.ai (bankruptcy May 2025). (This is contract negotiation winning over customer collaboration. The Manifesto warned about this in 2001. Twenty-four years later, with chatbots.)

4. The illusion of velocity: Engineers and managers feel faster with AI, even when measurements show they are slower. METR is one signal of this. The structural consequence matters more. If developers feel faster while being objectively slower, the same hours produce more code with less reflection. That is technical debt accumulating per hour rather than per Sprint. Code review burden, defect density, and the half-life of design decisions all move in the wrong direction at the same time. The remedy is the same one DORA has been preaching for more than a decade: trust the metrics, not the vibes. If you do not have throughput, lead time, change failure rate, and time-to-restore data, you do not have an AI productivity story. You have a feeling, and a tech-debt bill is arriving on a delay. (Self-reported productivity has been the most reliably wrong metric in software for forty years. AI did not fix it.)

5. The 22-to-25 paradox: Stanford 2026 reports that US software developers aged 22 to 25 saw employment fall nearly 20 percent from 2024, in the same field where AI’s measured productivity gains are clearest (14 to 26 percent improvements). The productivity gain per developer is real. The substitution against entry-level talent is also real. Companies are running both effects at once and calling it a productivity win. The pipeline cost shows up in three to five years, when the missing entry-level cohort would have been the next generation of senior engineers. (Optimizing the current Sprint at the cost of the next year. Every agile coach has watched a CFO make this trade. It always costs more than the spreadsheet predicts.)

6. Liability blindness: The Moffatt v. Air Canada ruling at the British Columbia Civil Resolution Tribunal in February 2024 established that companies are legally responsible for what their chatbots tell customers. Enterprises rarely model legal exposure at the time of procurement. They should. (Governance debt. Definition of Done with the legal column missing.)

7. Token burn without observability: Deloitte’s January 2026 AI Token Economics for CFOs survey (n=550) reports a healthcare enterprise whose token usage grew 8 to 10 percent per month and produced more than $6 million in unplanned annualized cost increases before finance had visibility into the driver. Per-seat licensing for AI agents is structurally broken. Agentic workflows consume 5-30 times more tokens per task than chatbots. Reasoning models can consume more than 100 times the compute per query of a single inference call. Usage-based metering with outcome attribution is the structural replacement. (Unmonitored work in progress, with a metered API on the back end. Every Kanban practitioner knows what unbounded WIP does to a system.)
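The growth rate in failure mode 7 looks modest until it compounds. The short calculation below assumes the 8 to 10 percent monthly growth holds steadily for twelve months, which the survey excerpt does not state; the function name is illustrative.

```python
def annual_multiple(monthly_growth_pct: float, months: int = 12) -> float:
    """Factor by which usage grows when monthly growth compounds."""
    return (1 + monthly_growth_pct / 100) ** months


low = annual_multiple(8)    # 8 percent per month
high = annual_multiple(10)  # 10 percent per month

print(f"8 percent per month  -> {low:.2f}x after 12 months")
print(f"10 percent per month -> {high:.2f}x after 12 months")
```

Under that assumption, token usage ends the year at roughly 2.5 to 3.1 times where it started, which is how a single-digit monthly increase turns into a budget surprise before finance sees the driver.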

The AI Spending Trap Is Friendly Territory for Agile Practitioners

Strip the AI spending trap analysis down: Infrastructure providers are profiting because they sell certain inputs (compute, tokens, electricity) regardless of whether their customers’ AI projects succeed. Consumers are getting real value because the product is given away. Enterprises are losing because they are buying certain inputs and hoping that outcomes follow.

The picks-and-shovels insight is older than gold rushes. The teams that get rich in any technology adoption cycle are the ones that already had a clear theory of value before the rush started. The agile principle is the same: build the smallest thing that produces a measurable outcome, measure it, and decide whether to continue. AI did not change that. It just made the wrong way faster.

Agile practitioners have been arguing this for two decades. Most of the time, we have lost the argument inside our organizations. The Stanford 2026 enterprise data is, in this reading, not an AI problem at all. It is a generation of executives buying technology with output-thinking and being surprised when outcomes do not follow.

AI Spending Trap — Conclusion

A fool with an LLM is still a fool. AI amplifies what you already are: competent practitioners become more effective; incompetent practitioners produce confident garbage faster. The tool is neutral. Your expertise is not.

So here is the Monday morning test. Open your last quarterly board deck. Find the slide on AI strategy. Count the verbs. How many describe outputs (deployed, launched, integrated, shipped) versus outcomes (reduced cycle time by X, recovered Y dollars in unplanned costs, increased customer NPS by Z points)?
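The verb count can be done by hand, but a rough script makes it repeatable across decks. This is a minimal sketch that uses only the example verbs from the paragraph above; the word lists are deliberately short and the slide text is hypothetical, so extend both before relying on the result.

```python
import re

# Verb lists taken from the examples in the text; extend them for your own deck.
OUTPUT_VERBS = {"deployed", "launched", "integrated", "shipped"}
OUTCOME_VERBS = {"reduced", "recovered", "increased"}


def count_verbs(slide_text: str) -> dict:
    """Count output verbs and outcome verbs in a block of slide text."""
    words = re.findall(r"[a-z]+", slide_text.lower())
    return {
        "outputs": sum(word in OUTPUT_VERBS for word in words),
        "outcomes": sum(word in OUTCOME_VERBS for word in words),
    }


# Hypothetical slide text for illustration only.
slide = (
    "Deployed copilots to 4,000 seats. Launched three agents. "
    "Integrated the chatbot with the CRM. Reduced cycle time by 12 percent."
)

counts = count_verbs(slide)
print(counts)
```

A keyword match cannot tell whether an outcome claim is backed by a baseline and a measurement window, so treat the count as a prompt for the conversation, not as the verdict.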

If the verbs are mostly outputs, you are not in the dip of the J-curve. You are off the curve entirely. The work of the intangibles has not started. But the bill from your token provider has.

The post The AI Spending Trap: Why Adoption Outpaces Outcomes appeared first on Age-of-Product.com.